ETL solutions tend to be opaque to the operators running them. Any non-trivial ETL solution has multiple things it can potentially do: reset its housekeeping tables, run the test suite, import master data, execute a load phase, display its environment configuration, and so on.

ETL tools save their artifacts as plain files or as entries in some kind of repository, or they bundle them into release packages. They also provide a way to run these artifacts, optionally passing in some parameters.

From a functional perspective, this is all an ETL tool needs to do. It gives developers a way of running their flows. What else is needed when deploying an ETL project?

The problem

Suppose the ETL project can import some CSV data into its master data storage. The operations manual will often instruct the operator to run something like this:

It’s hard to see what’s even going on there. Any self-respecting ops team running that project for a while will likely come up with a set of custom wrapper scripts for common tasks.

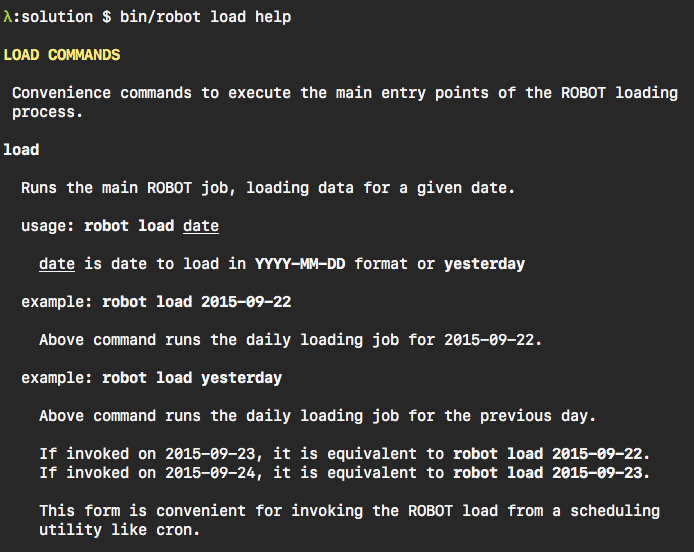

But that’s exactly what the project should offer in the first place. Something closer to this:

Ideally, invoking the ETL project follows conventions that are already idiomatic in the production environment. Operators should not need ‘training’ in order to invoke the project’s basic functions.

An ETL project’s invocation interface should be expressed in terms of its intended use, not the artifacts of the underlying technology.
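As a minimal sketch of that idea in Python, a single entry point can expose subcommands named after what operators want to do, and keep the mapping to internal artifacts private. The command names, the flow paths, and the `etl-runner` executable are hypothetical placeholders for whatever the actual ETL tool uses. To keep the mapping visible, the sketch only builds the runner command line instead of executing it.

```python
import argparse

# Hypothetical mapping from operator-facing commands to internal flow artifacts.
# Flow paths and the "etl-runner" executable are placeholders, not a real tool.
FLOWS = {
    "import-master-data": "flows/master_data/import_csv.flow",
    "run-load": "flows/load/main.flow",
    "reset-housekeeping": "flows/admin/reset_housekeeping.flow",
}


def build_runner_command(command: str, params: dict[str, str]) -> list[str]:
    """Translate an intent-level command into the generic runner invocation."""
    cmd = ["etl-runner", "--flow", FLOWS[command]]
    for key, value in sorted(params.items()):
        cmd += ["--param", f"{key}={value}"]
    return cmd


def main(argv: list[str]) -> list[str]:
    parser = argparse.ArgumentParser(prog="etl", description="Operator entry points.")
    sub = parser.add_subparsers(dest="command", required=True)
    importer = sub.add_parser("import-master-data", help="Import a CSV file into master data.")
    importer.add_argument("csv_file", help="Path to the CSV file to import.")
    sub.add_parser("run-load", help="Execute the load phase.")
    sub.add_parser("reset-housekeeping", help="Reset the housekeeping tables.")

    args = parser.parse_args(argv)
    params = {"input_file": args.csv_file} if args.command == "import-master-data" else {}
    # Only this script knows how intents map to flows; operators never see the paths.
    return build_runner_command(args.command, params)
```

With this in place, an operator types `etl import-master-data customers.csv`, which reads like the task itself. A real script would hand the resulting list to `subprocess.run` and pass the child's `returncode` on to `sys.exit`.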

A short digression

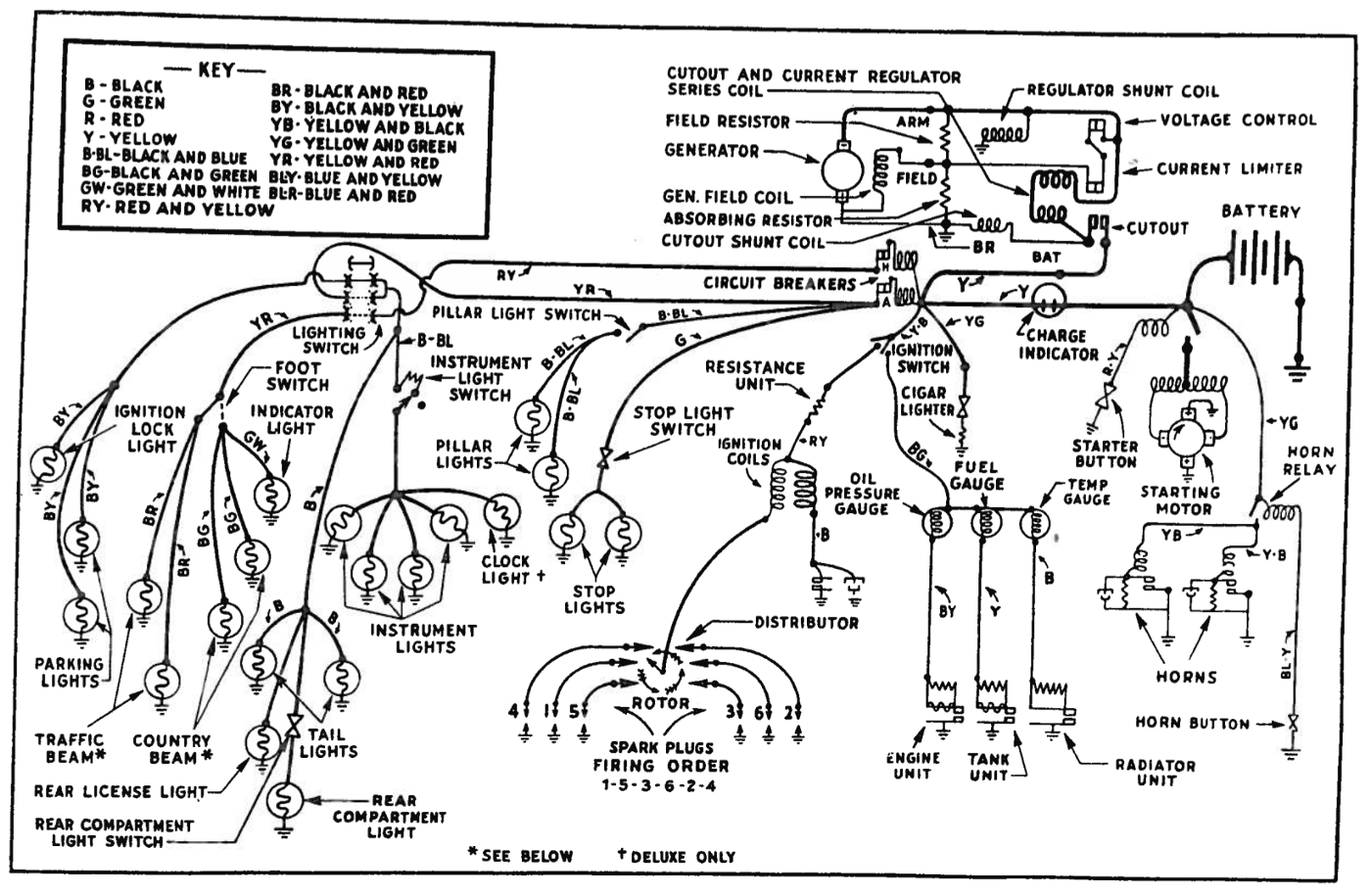

What are the steps to start your car? Press a button? Turn a key? Looking back, it’s fascinating what the steps used to be.

Starting an old-timer is not intuitive. The steps a driver had to perform did not correspond to his or her intention: simply starting the engine.

Similarly, an ops team can’t be very effective if it is exposed to overwhelming implementation details and then handed instructions on how that complexity maps to its intended use cases.

Benefits of explicit entry points

Alignment of invocation interface with problem space

Having a set of entry points framed in terms of the customer’s problem space eliminates unnecessary mental gymnastics when running the project. As a result, the customer and the ops team have an immediate, intuitive understanding of the invocation interface.

It helps with demos and gathering feedback, too. Business stakeholders are able to follow a technical demo much better when the presenter never conceptually departs from their problem domain.

Users operate most naturally within the mental model of their use cases, not implementation architecture.

Smaller surface area

When there are explicit entry points to the ETL solution, they are the only entry points to the solution. Implementation details, such as the way flows are structured and composed internally, can change without affecting the outside surface of the solution. This makes refactors and architecture adjustments much less disruptive. The entry points form a contract between the solution and its users. That contract changes rarely, and only as a conscious decision. It won’t break incidentally because of immaterial implementation changes.

Embedded documentation

Command line utilities conventionally offer a --help switch that contains some level of usage documentation. Entry point scripts for ETL projects can embrace that convention as well. Documentation that is kept with the actual code is much more likely to be correct and up to date than external documents. The presence of embedded documentation can be tested automatically, at least to some extent, and it can certainly be a required checkbox in code reviews. Embedded documentation beats external documentation in every way relevant to actually running the software, and the entry point scripts are a great place to put it.
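One way such an automated check might look, sketched in Python with `argparse`: every entry point is registered together with its one-line documentation, and a helper reports commands whose rendered `--help` output carries no description. The command names and texts are hypothetical; a CI job could fail whenever the returned list is non-empty.

```python
import argparse

# Hypothetical entry points and their one-line documentation.
COMMANDS = {
    "import-master-data": "Import a CSV file into the master data storage.",
    "run-load": "Execute the load phase.",
    "show-config": "Print the environment configuration and exit.",
}


def build_parser(
    commands: dict[str, str],
) -> tuple[argparse.ArgumentParser, dict[str, argparse.ArgumentParser]]:
    """Build the top-level parser; each subcommand gets its text as --help output."""
    parser = argparse.ArgumentParser(prog="etl", description="Entry points of the ETL project.")
    sub = parser.add_subparsers(dest="command", required=True, metavar="COMMAND")
    subparsers = {}
    for name, text in commands.items():
        # `help` is listed by `etl --help`; `description` is shown by `etl <command> --help`.
        subparsers[name] = sub.add_parser(name, help=text, description=text)
    return parser, subparsers


def undocumented_commands(commands: dict[str, str]) -> list[str]:
    """Return entry points whose rendered --help output lacks a description."""
    _, subparsers = build_parser(commands)
    missing = []
    for name, sub_parser in subparsers.items():
        description = (sub_parser.description or "").strip()
        rendered = " ".join(sub_parser.format_help().split())  # undo line wrapping
        if not description or description not in rendered:
            missing.append(name)
    return missing
```

Checking the rendered help rather than only the registry means the test also catches a description that is set but never reaches the operator.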

Controlling exit codes

Exposing entry points as scripts also makes it possible to provide semantically consistent exit codes. This might not be a big deal when the ETL solution is running standalone. But when it is invoked via schedulers, or composed into a bigger solution via a shell script, it is vital that it generates reliable, semantically rich exit codes. A generic runner will likely be able to indicate success or failure, but a custom script can return exit codes that encode additional information. If an orchestrating script knows the reason for failure, say, a DB being down vs. a file not being present, it might handle these cases differently.
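A minimal Python sketch of that idea: the entry point wraps the ETL action and translates each failure mode into a distinct exit code. The numeric values are borrowed from the BSD `sysexits.h` conventions; the exception classes and `simulated_import` are hypothetical stand-ins for whatever the real ETL layer raises.

```python
import enum
import sys
from typing import Callable


class ExitCode(enum.IntEnum):
    """Exit codes of the entry points; values borrowed from BSD sysexits.h."""
    OK = 0
    INPUT_MISSING = 66        # EX_NOINPUT: a required input file is not present
    SERVICE_UNAVAILABLE = 69  # EX_UNAVAILABLE: e.g. the database is down
    INTERNAL_ERROR = 70       # EX_SOFTWARE: unexpected failure inside the ETL
    CONFIG_ERROR = 78         # EX_CONFIG: environment configuration is invalid


class DatabaseUnavailable(Exception):
    """Hypothetical error the ETL layer raises when the database is unreachable."""


class ConfigError(Exception):
    """Hypothetical error raised when required configuration is missing."""


def run_entry_point(action: Callable[[], None]) -> int:
    """Run an ETL action and translate its failure mode into an exit code."""
    try:
        action()
    except ConfigError as exc:
        print(f"configuration error: {exc}", file=sys.stderr)
        return ExitCode.CONFIG_ERROR
    except FileNotFoundError as exc:
        print(f"input not found: {exc}", file=sys.stderr)
        return ExitCode.INPUT_MISSING
    except DatabaseUnavailable as exc:
        print(f"database unavailable: {exc}", file=sys.stderr)
        return ExitCode.SERVICE_UNAVAILABLE
    except Exception as exc:  # anything unanticipated is an internal error
        print(f"unexpected error: {exc!r}", file=sys.stderr)
        return ExitCode.INTERNAL_ERROR
    return ExitCode.OK


def simulated_import(file_present: bool, db_up: bool, config_ok: bool = True) -> None:
    """Hypothetical stand-in for the real import flow, used to exercise each failure mode."""
    if not config_ok:
        raise ConfigError("ETL_DB_URL is not set")
    if not file_present:
        raise FileNotFoundError("customers.csv")
    if not db_up:
        raise DatabaseUnavailable("master data database is not reachable")
```

The script would end with `sys.exit(run_entry_point(...))`. An orchestrating shell script or scheduler can then branch on the exit status, for example retrying later on 69 (database down) but alerting a human on 66 (file missing).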

Environment configuration

Entry point scripts have the opportunity to set up environment configuration before invoking any ETL at all. An entry point script is a great place to set and potentially validate environment configuration.

It’s a good idea to have an entry point script that does nothing but show, and potentially validate, the environment configuration it would use to invoke the actual ETL. This helps a lot when you are debugging an issue and want to be sure that it is not caused by misconfiguration.
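A sketch of such a `show-config` entry point in Python, assuming the project reads its settings from environment variables. The variable names, the default log level, and the set of valid levels are all hypothetical. Passwords embedded in URLs are masked so the output can be pasted safely into a ticket.

```python
from collections.abc import Mapping
from urllib.parse import urlsplit

# Hypothetical configuration variables the ETL project reads from its environment.
REQUIRED = ("ETL_DB_URL", "ETL_INPUT_DIR")
DEFAULTS = {"ETL_LOG_LEVEL": "INFO"}
VALID_LOG_LEVELS = {"DEBUG", "INFO", "WARNING", "ERROR"}


def resolve_config(env: Mapping[str, str]) -> dict[str, str]:
    """Pick the known variables from the environment, falling back to defaults."""
    config = dict(DEFAULTS)
    for key in (*REQUIRED, *DEFAULTS):
        if env.get(key):
            config[key] = env[key]
    return config


def validate_config(config: Mapping[str, str]) -> list[str]:
    """Return human-readable problems; an empty list means the config looks usable."""
    problems = [f"{key} is not set" for key in REQUIRED if not config.get(key)]
    level = config.get("ETL_LOG_LEVEL", "")
    if level not in VALID_LOG_LEVELS:
        problems.append(f"ETL_LOG_LEVEL has invalid value {level!r}")
    return problems


def mask(key: str, value: str) -> str:
    """Hide passwords embedded in URLs such as postgresql://user:secret@host/db."""
    if key.endswith("_URL"):
        password = urlsplit(value).password
        if password:
            return value.replace(f":{password}@", ":****@", 1)
    return value


def show_config(env: Mapping[str, str]) -> tuple[str, list[str]]:
    """Render the effective configuration and the list of validation problems."""
    config = resolve_config(env)
    lines = []
    for key in sorted({*REQUIRED, *DEFAULTS}):
        value = config.get(key)
        lines.append(f"{key}={mask(key, value) if value else '<not set>'}")
    return "\n".join(lines), validate_config(config)
```

The script itself would call `show_config(os.environ)`, print the rendered text, write any problems to stderr, and exit non-zero when the list is not empty (for instance with 78, `EX_CONFIG` in `sysexits.h`).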

Conclusion

ETL projects are software, too. Like any other software, they should provide an interface that is aligned with their intended use cases, not with the incidental details of their implementation. Explicit entry points help users understand how to use the project, and they offer additional benefits: a small, explicit public surface area, embedded documentation, control over exit codes, and the opportunity to manage environment configuration.